The _airbyte_raw_ table serves as the initial landing point for data synced by Airbyte, especially when dealing with JSON blob data from various sources. This table is crucial for maintaining the integrity of the raw data before any transformation occurs.

Raw Data Preservation: The _airbyte_raw_ table ensures that the original data is preserved in its raw form, adhering to the ELT philosophy of not altering data during the extract and load processes.

Normalization: If basic normalization is enabled, Airbyte uses the raw JSON data to create a schema and tables that are more suitable for the destination's data format.

The flow is as follows. Data is extracted from the source and loaded into the destination as a JSON blob. If enabled, basic normalization structures the data into tables and columns. The transformed data is then ready for further analysis or transformation.

Structured Data: Converts JSON into columns for easier analysis.

Analytics Readiness: Prepares data for use in analytics tools.

Basic normalization can be toggled on or off in the connection settings. Normalization may increase compute costs at the destination. Customization is possible through SQL views or custom dbt projects, and custom transformations can be built on top of Airbyte's output. Within Airbyte's ELT framework, normalization makes the synced data more useful and provides a foundation for analysis and decision-making.

Given a JSON object from a source, basic normalization would create a structured table in the destination, mapping JSON fields to table columns with appropriate data types. The table definition also carries column declarations such as "_airbyte_emitted_at" TIMESTAMP_WITH_TIMEZONE.
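The mapping from JSON fields to typed columns can be illustrated with a minimal Python sketch. This is not how Airbyte implements basic normalization (Airbyte generates and runs dbt SQL models); it only mimics the idea in memory. The sample rows, the _airbyte_data column name, the SQL_TYPES mapping, and the function names are assumptions made for illustration.

```python
import json

# Hypothetical raw rows shaped like an _airbyte_raw_ table: a JSON blob plus
# the _airbyte_emitted_at timestamp. The _airbyte_data name is an assumption.
raw_rows = [
    {"_airbyte_emitted_at": "2023-01-01T00:00:00+00:00",
     "_airbyte_data": '{"id": 1, "name": "Ada", "active": true}'},
    {"_airbyte_emitted_at": "2023-01-01T00:05:00+00:00",
     "_airbyte_data": '{"id": 2, "name": "Grace", "score": 9.5}'},
]

# Illustrative mapping from Python value types to generic SQL type names.
SQL_TYPES = {bool: "BOOLEAN", int: "BIGINT", float: "DOUBLE", str: "VARCHAR"}


def infer_columns(rows):
    """Collect every top-level JSON key and pick a SQL type from its first value."""
    columns = {}
    for row in rows:
        for key, value in json.loads(row["_airbyte_data"]).items():
            columns.setdefault(key, SQL_TYPES.get(type(value), "VARCHAR"))
    return columns


def normalize(rows):
    """Turn each JSON blob into a flat row with one value per inferred column."""
    columns = infer_columns(rows)
    table = []
    for row in rows:
        data = json.loads(row["_airbyte_data"])
        # Fields missing from a record become None (NULL in a real table).
        flat = {name: data.get(name) for name in columns}
        flat["_airbyte_emitted_at"] = row["_airbyte_emitted_at"]
        table.append(flat)
    return columns, table


columns, table = normalize(raw_rows)
```

Here `columns` holds the inferred schema and `table` holds the flattened rows, with the raw timestamp carried over so each row can still be traced back to its sync.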

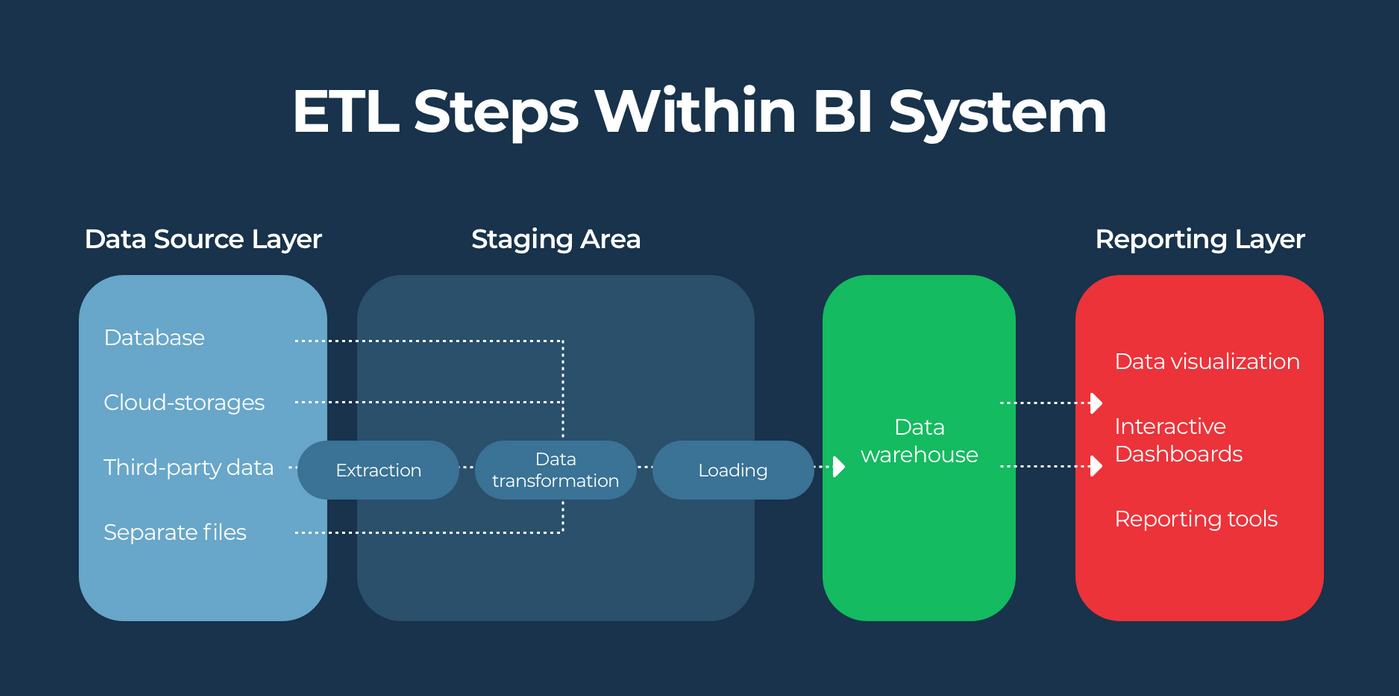

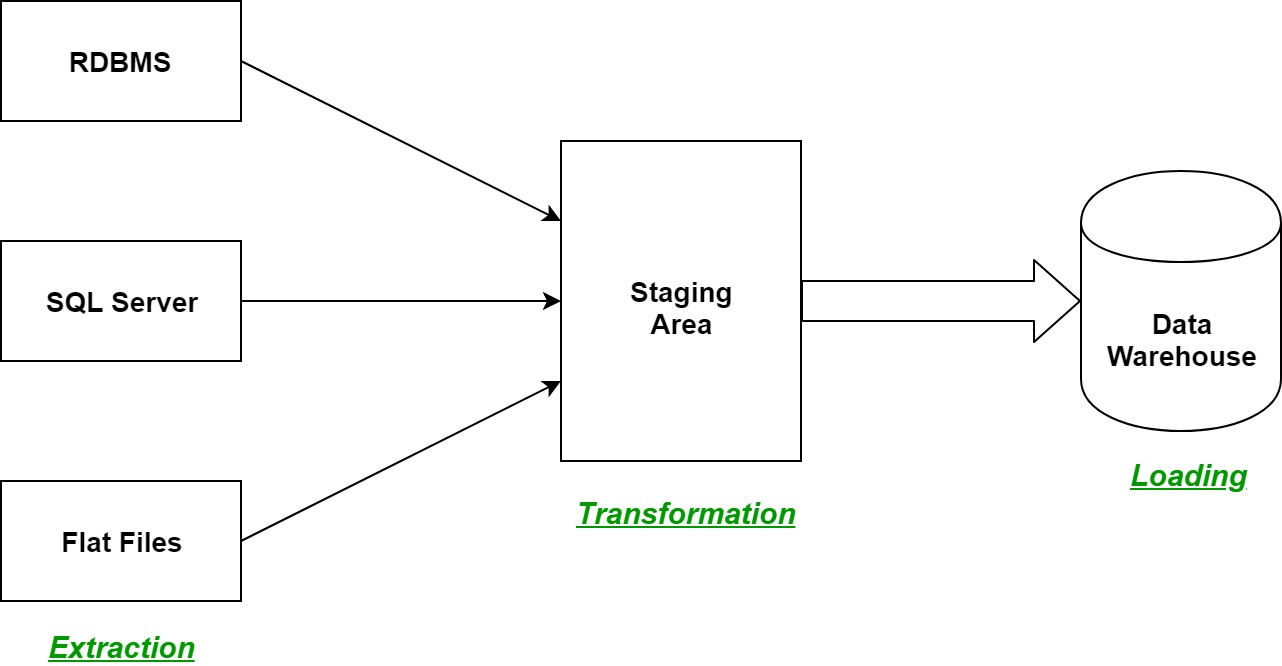

Airbyte Basic Normalization is a crucial step in the ELT process, transforming JSON blobs into structured tables suitable for analytics. This process, which occurs after data extraction and loading, is the 'T' in ELT: transformation. Airbyte leverages a dbt Transformer for this purpose, generating dbt SQL model files and executing them on supported destinations.

ELT Process: Airbyte focuses on the 'EL' with an optional 'T' for transformation.

Normalization: The transformation of JSON blobs into structured tables.

dbt Transformer: Used by Airbyte to perform basic normalization.

Beyond normalization, ETL processes raise a number of broader challenges.

Permissions: Do your networks and systems have access and rights to the data?

Data freshness: Are you capturing real-time data, or stale data that's no longer of value? How ephemeral is the data? Are you able to capture it before the data passes its lifetime?

Data quality and integrity: Do you have validation in place to notice if the data that is extracted is in an expected form? Combining data from multiple sources can be challenging due to differences in data formats, structures, and definitions. It can be difficult to identify and correct errors or inconsistencies in the data. Reasoning about the lineage of data (i.e. what the original source was) can be difficult once data sources are integrated.

Availability and scale: Is there enough storage and compute in your staging area to keep up with the data? (The more data that needs to be transformed, the more computationally and storage intensive it can become.)

Filtering: Which data is important and which can be ignored or discarded?

Security and privacy: ETL processes often involve sensitive or confidential data. Organizations want to ensure that data is protected and accessed only by authorized users, with increased complexity when dealing with large volumes of data or data from multiple sources.

Data governance and compliance: Data governance policies and regulations stipulate how data provenance, privacy, and security are to be maintained, with additional complexity arising from the integration of complex data sets or data subject to multiple disparate regulations.

Data transformation and cleansing: ETL processes often require significant data transformation and cleansing in order to prepare data for analysis or integration with other systems. This can be challenging, particularly when dealing with complex data structures or integration requirements.

Integration with other systems: ETL processes often involve integrating data with other systems, such as data warehouses, analytics platforms, or business intelligence tools.
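The question raised under data quality and integrity, whether validation is in place to notice when extracted data is not in an expected form, can be sketched as a small check in Python. This is a minimal illustration, not part of Airbyte or any specific ETL tool; the EXPECTED_FIELDS schema, the sample records, and the validate_record helper are all hypothetical.

```python
# Hypothetical expected shape for an extracted record: field name -> Python type.
EXPECTED_FIELDS = {"id": int, "email": str}


def validate_record(record):
    """Return a list of problems; an empty list means the record looks as expected."""
    problems = []
    for field, expected_type in EXPECTED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


# Illustrative extracted records: the second has a mistyped id and no email.
records = [
    {"id": 1, "email": "a@example.com"},
    {"id": "2"},
]

# Map each record's position to the problems found in it.
report = {index: validate_record(record) for index, record in enumerate(records)}
```

Running a check like this right after extraction, before data from several sources is combined, keeps each problem tied to the record and source it came from.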
